Get a domain

Have a website idea? With 400+ domain extensions (everything from .com to .coffee), you'll never run into the old my-name-isn't-available problem.

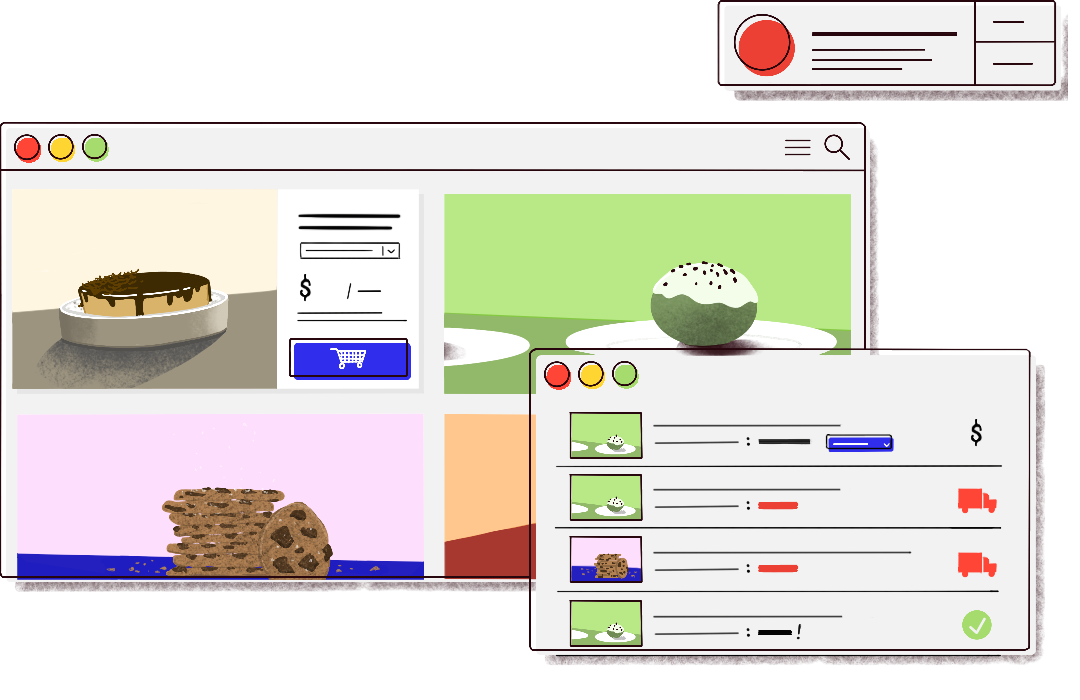

Put it to work

Get building faster with 100+ automatic DNS plugins for popular services like Fastmail, Shopify, and Bitly (or you can use our full DNS manager to do it all yourself).

Come back for more

Have another idea? We'll be here when you're ready. We've been around since 2008, and we plan to be here for decades more.